We keep funding the wrong thing.

Across boardrooms, grant reports, and government dashboards, the questions being asked are always the same: How many devices were distributed? How many learners were enrolled?

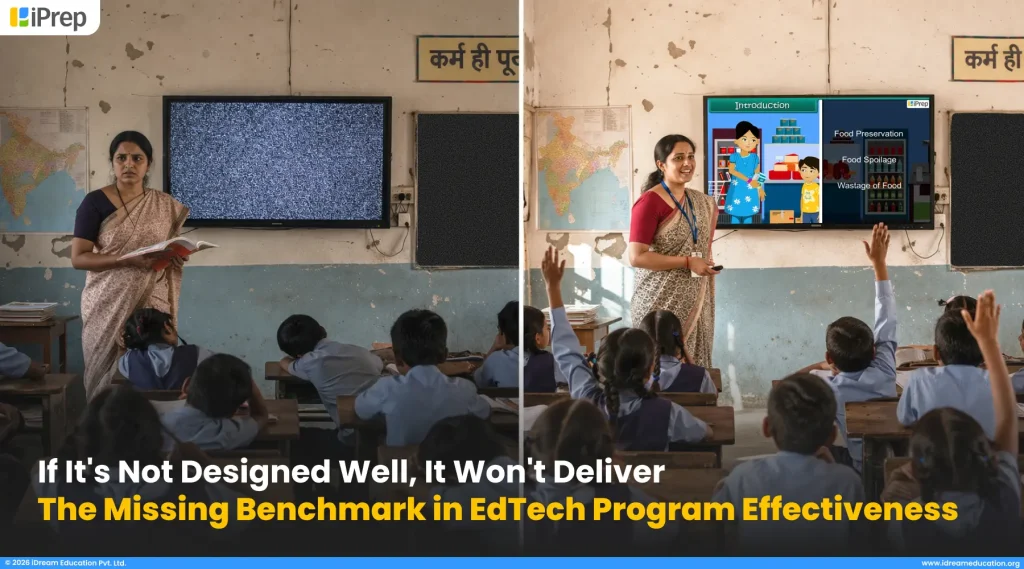

And yet, classrooms remain disengaged. Learners drop off. Skills don’t transfer. Communities stay exactly where they were before the program arrived.

We have invested billions in EdTech and celebrated launches. We have taken photographs in front of screens. But somewhere between the press release and the ground reality, something is breaking down, and most of us are looking in the wrong direction to find it.

The problem is not the technology. It never was.

The real gap, the one that rarely makes it into a program proposal, a CSR impact report, or a policy framework, is EdTech program design. Not what tool you deploy, but how the entire intervention is architected: who it is built for, what behaviour it is trying to shift, and what support structures surround it. How will usage be ensured? How is it aligned with classroom teaching? What content will be enabled? How will usage be tracked, and how will teachers be guided?

EdTech program effectiveness is not a technology problem. It is a design problem.

And until we treat EdTech program design as a non-negotiable benchmark – not an afterthought, not a slide in a pitch deck – we will keep funding interventions that look impressive in reports and deliver very little in real lives.

This is not a critique of technology. Technology, when placed inside a well-designed programmatic intervention, can be genuinely transformative. But right now, we are handing powerful EdTech tools to people without the scaffolding, the pedagogy, the human touchpoints, or the contextual relevance that makes those tools actually work. “We are building roads and forgetting to understand where people need to go.”

There Is No Benchmark, So There Is No Design. That Is the Real Problem.

Here is something nobody in the EdTech funding ecosystem wants to say out loud: There is no benchmark for program design. There is no standard. There are no specifications.

When a funder approves an EdTech initiative, they ask for a budget breakdown, a beneficiary count, and a timeline. When a government procurement happens, there are technical specifications for the device: screen size, battery life, operating system.

But almost nobody asks: How exactly will this program be designed and delivered on the ground? And the silence around that question is costing us outcomes at scale.

A story from the ground highlighting gaps in EdTech Program Design

In one of our projects, we conducted district-level teacher training. On paper, it looked efficient – one training event, 1-2 teachers from every school in the district, maximum reach.

But here is what the design actually assumed: that each teacher, having attended a single session, would return to their school and cascade that training to every other teacher in the building.

That never happened. It could not have happened.

Because there was no structured plan. No follow-up visit. No support material for the teacher to carry back. No one checked whether the knowledge had actually transferred from the trained teacher to the other teachers in the school.

The result? Half the solution sat unused.

The platform was there. The devices were there. Even the content was there. But teachers had never been shown how to integrate the technology into their lesson plans, into their pedagogy. Without that, the EdTech was just furniture. And this is just one such case.

The questions that should be mandatory for EdTech Program Effectiveness – but never are.

When any EdTech program is proposed, whether it is a CSR initiative, a government scheme, or an NGO-led initiative, the following questions should be non-negotiable specifications:

- How will the program be implemented at the school level, step by step?

- How will teachers be trained, not just once, but continuously?

- How will pedagogical integration be supported, so teachers know how to connect the technology to their lesson plans?

- What does in-school support look like? Who visits, how often, and with what purpose?

- What does online support look like when a teacher is stuck at 11 AM before a lesson?

- What does offline support look like for schools with no connectivity?

- How will teacher usage data be tracked and shared – not just at review time, but every month?

- What happens when a school goes three weeks without engaging with the platform?

These are not implementation details. These are design specifications. And right now, most programs are funded and launched without a single one of them answered clearly.

Why does this gap exist?

The honest answer is that the sector has been far more comfortable evaluating what it can see: devices, enrollments, content libraries. EdTech program design is invisible until it fails. And by the time it fails, the funding cycle has usually moved on.

But there is also a structural problem. Funders do not demand design benchmarks because no standard exists to demand against. Implementers do not build rigorous design frameworks because no one is asking for them at the proposal stage. And governments procure technology with detailed hardware specifications while leaving the human delivery architecture entirely undefined.

The result: We are very good at specifying what we are buying. We are very poor at specifying how it will actually work.

This has to change. Not through more reporting. Not through better dashboards. But through a shared, sector-wide insistence that EdTech program design should have a benchmark – with clear specifications, minimum standards, and accountability built in from Day 1.

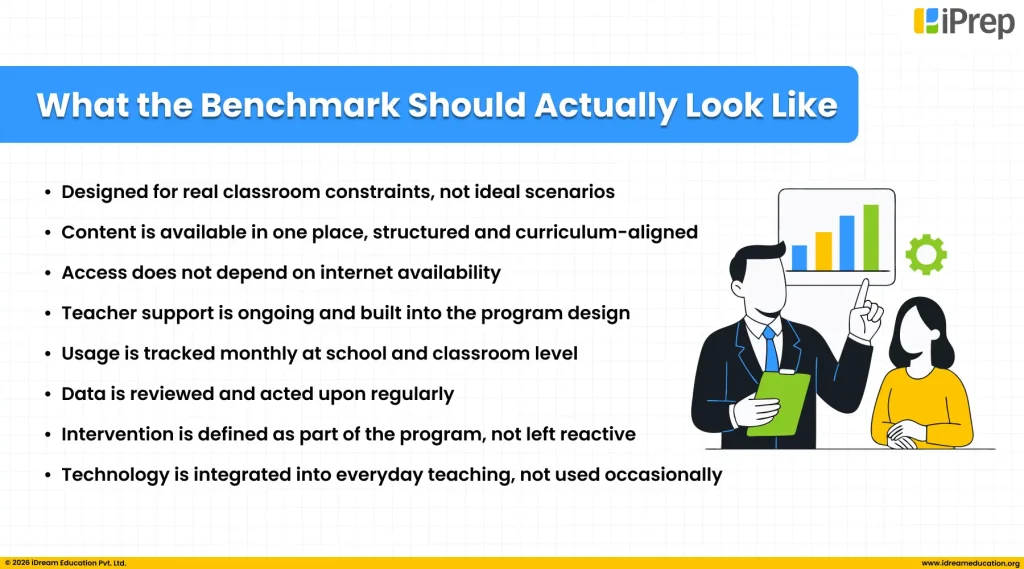

What the Benchmark Should Actually Look Like

If we agree that program design needs a benchmark, the next question is: benchmark against what?

Not against what looks good in a proposal. Not against what is easy to report. But against what the ground actually demands — the classroom, the teacher, the learner, the community, and the very real constraints they live within every single day.

Here are the principles that should form the foundation of any EdTech program design benchmark, built not from theory but from grassroots reality:

Enabled content should be structured and available in one place

A teacher standing in front of 40 children does not have the time or the bandwidth to hunt across multiple platforms, apps, and folders to find the right content for that day’s lesson. And yet, that is exactly what most EdTech programs silently ask them to do.

Therefore, a critical benchmark for EdTech program effectiveness is structured content in one place. Every program must ensure that the right content (mapped to the curriculum, sequenced by grade and subject, and ready to use) is available to the teacher in one place, with no searching, no switching between sources, and no dependence on a good internet connection to access it.

That last condition matters because the majority of schools that need EdTech the most are also the schools with the least reliable internet. If a teacher cannot walk into a classroom and run a lesson without a signal, the program has not been designed for the ground. It has been designed for a demo. Every benchmark must ask: is content pre-loaded, curriculum-mapped, and accessible offline, so that a teacher can teach with confidence every single day, regardless of connectivity? If the answer is no, the design is incomplete.

Teacher Training & Support Should be a Continuous Commitment

A teacher trained once is not a teacher equipped. Real capacity building means structured, ongoing support: before the program launches, during implementation, and whenever things go wrong. Every EdTech program must define, upfront, what teacher support looks like across the full program lifecycle. Not a single orientation. Not a helpline number. A real, designed support journey with in-person visits, peer learning touchpoints, usage monitoring, retraining, personalized support, and a dedicated person who is specifically responsible for ensuring that no teacher is left behind and that every teacher gets support when needed. The goal is simple: no classroom day should pass with the technology sitting unused, whatever the reason.

Pedagogical integration for everyday use of EdTech solutions

Technology placed next to teaching is not the same as technology woven into teaching. Every EdTech program must define exactly how the digital tool connects to what is already happening in the classroom – the lesson plan, the curriculum, the assessment cycle. Teachers need to be shown, practically and repeatedly, how to bring EdTech into their pedagogy, not as an add-on at the end of a lesson, but as a living part of how learning happens. Without this, even the best platform becomes a screen that gets switched on twice a week and ignored the rest of the time.

Usage data must be tracked every month, not every quarter

School-level usage data is where the real story lives. How many active days were there in a week? In which classrooms is digital content being used, and in which is it not? Which learners are falling behind the usage curve? Which content categories are used most? Which subjects are being engaged with, and which are being ignored? How frequently is each category used, for which subjects, and what correlations are emerging across classes and schools?

This data should not be collected for an end-of-year report. It should be tracked monthly, shared with the right people, and used to make decisions in real time. Your program implementer should also be able to act on this data – to improve usage, course-correct on the ground, and guide teachers and schools before small gaps become large failures. Every EdTech program design must specify what data will be collected, at what frequency, by whom, and who is responsible for not just reading it – but acting on it.
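For implementation teams, the questions above translate fairly directly into a monthly school-level summary. The Python sketch below is purely illustrative: it assumes a hypothetical usage log in which each record carries a school ID, classroom, subject, and date. The `UsageEvent` record and `monthly_summary` function are invented for this example and are not part of any specific platform.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class UsageEvent:
    """Hypothetical usage record: one piece of content opened in one classroom."""
    school_id: str
    classroom: str
    subject: str
    day: date


def monthly_summary(events, enrolled_schools, year, month):
    """Summarise one calendar month of usage for every enrolled school.

    Schools with no usage still appear (with zero active days), so the
    report shows which schools are NOT using the platform, not just which are.
    """
    raw = {sid: {"days": set(), "classrooms": set(), "subjects": Counter()}
           for sid in enrolled_schools}
    for e in events:
        # Skip events outside the reporting month or from unknown schools.
        if (e.day.year, e.day.month) != (year, month) or e.school_id not in raw:
            continue
        s = raw[e.school_id]
        s["days"].add(e.day)            # distinct days with any usage
        s["classrooms"].add(e.classroom)
        s["subjects"][e.subject] += 1   # how often each subject was used
    return {
        sid: {
            "active_days": len(s["days"]),
            "classrooms_using": sorted(s["classrooms"]),
            "subject_counts": dict(s["subjects"]),
        }
        for sid, s in raw.items()
    }


# Illustrative data only, not results from any real program.
example_events = [
    UsageEvent("S1", "6A", "Maths", date(2024, 7, 1)),
    UsageEvent("S1", "6B", "Science", date(2024, 7, 1)),
    UsageEvent("S1", "6A", "Maths", date(2024, 7, 3)),
    UsageEvent("S2", "7A", "English", date(2024, 6, 28)),
]
report = monthly_summary(example_events, ["S1", "S2"], 2024, 7)
print(report)
```

Listing every enrolled school, including those with zero active days, is a deliberate choice: the schools missing from a usage report are often the ones that most need attention.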

Monitoring is not enough. Intervention must be designed in.

Knowing something is going wrong and doing nothing about it is not monitoring – it is observation. A well-designed EdTech program does not just track usage. It defines, in advance, what triggers a response. If a school has not engaged with the platform in two weeks, who is notified? What happens next? If a teacher’s usage drops suddenly, is there a support visit built into the design? Intervention must be a named, structured part of the program architecture. Not a reaction. A design decision made before the program ever launches.
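Trigger rules like these can be written down as precisely as a device specification. As a minimal sketch, assuming a 14-day inactivity threshold for schools, a drop below half of last month's sessions as the signal for a teacher, and role names that are hypothetical placeholders, the check might look like this in Python:

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical thresholds: each program would set its own at the design stage.
INACTIVITY_LIMIT = timedelta(days=14)  # "two weeks without engaging with the platform"
DROP_RATIO = 0.5                       # this month's sessions below half of last month's


@dataclass
class Action:
    target: str  # school or teacher the action concerns
    reason: str
    owner: str   # hypothetical named role responsible for responding


def check_triggers(last_active, teacher_sessions, today):
    """Return the responses the program design requires.

    last_active: school_id -> date of most recent platform use
    teacher_sessions: teacher_id -> (sessions_last_month, sessions_this_month)
    """
    actions = []
    for school, last in last_active.items():
        idle = today - last
        if idle >= INACTIVITY_LIMIT:
            actions.append(Action(school, f"no platform use for {idle.days} days",
                                  "district coordinator"))
    for teacher, (prev, curr) in teacher_sessions.items():
        # Only flag a drop if the teacher was using the platform before.
        if prev > 0 and curr < prev * DROP_RATIO:
            actions.append(Action(teacher, f"sessions fell from {prev} to {curr}",
                                  "in-school support person"))
    return actions


# Illustrative data only.
actions = check_triggers(
    last_active={"S1": date(2024, 7, 29), "S2": date(2024, 7, 10)},
    teacher_sessions={"T1": (12, 11), "T2": (10, 3)},
    today=date(2024, 7, 31),
)
for a in actions:
    print(a)
```

The thresholds and owners are design decisions for each program to make. What matters is that they are fixed before launch, so that every flagged school or teacher already has a named person responsible for the response.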

These are not aspirational ideals. They are minimum design requirements for EdTech program effectiveness. Any EdTech program that cannot clearly answer each of these principles at the proposal stage should be redesigned before it is funded. Because a program that is not designed for the ground will not deliver on the ground, no matter how good the technology is.

We Do Not Write This From the Outside.

The principles shared in this blog are not a wishlist. They are not borrowed from a framework written in a conference room far from a classroom. They are what we have learned from years of designing and implementing EdTech programs with schools, teachers, and the CSR, NGO, government, and other partners who serve them.

Every benchmark outlined here (continuous teacher support, structured and offline-ready content, pedagogical integration, monthly data tracking, and designed-in intervention) is part of how we approach EdTech program design in our own work. Not as a rigid formula, but as a living practice that we refine with every program, every partner, and every piece of ground-level feedback we receive.

We are learning too. Every implementation teaches us something new about what works, what does not, and what needs to be redesigned. But what we know with certainty is this: when these practices are in place, when program design is treated with the same seriousness as technology selection, the outcomes are different. Teachers show up differently. Learners engage differently. Data tells a story worth acting on.

And equally important, none of this is one-size-fits-all. Every stakeholder we work with brings a different context, a different community, a different set of constraints and ambitions. Our program design is always customized to the geography, to the learner profile, to the institutional capacity of our partners, and to the specific change each program is trying to create.